Fine tuning LLMs to transcribe handwritten letters

The Mary Hamilton Collection within Manchester Digital Collections is a vast treasure trove of handwritten letters between Hamilton and her various peers, friends, family and associates. A popular and well-liked lady, living around the end of the 18th century, she conversed through writing prolifically; she was someone we might today term a social media influencer.

Professor David Denison has worked tirelessly with his team to transcribe these letters so that their content can be analysed and understood by researchers. It is a continual working in progress, meticulously reading the handwriting of individuals ranging from elegant cursive script to scrawls on scraps of old paper. Although David and his team are the experts who perform this transcription by hand, it would be useful to have an automated transcription starting point, especially when text is at the extremes of being very high quality or very poor quality. In the former, much of any automated transcription may be accurate already; in the latter, perhaps some insight into what the prose says might be valuable.

LLMs for image recognition

Several LLM models have support for image recognition, including open source or ‘freely available’ models such as Llama and Gemma. This varies in quality and accuracy, and highly generalised; they have to cope with “How many cats are in this picture?” through “Describe the emotional tone of this painting” to “Is there explicit or inappropriate context in this photo?”. This makes their accuracy on specific tasks like transcription quite poor; even if they are able to read the handwriting, they may misunderstand what they are being asked to do. Additionally, superfluous introductory and explanatory text (“Sure, I can help you with that”) tends to get spat out merged into any transcription.

Fine tuning models

Large models take months to train on vast quantities of computing resource. Even training GPT-3 — a relatively old LLM — on a single NVIDIA V100 such as those in the Research IT Shared Compute Facility is estimated to take around 355 years! Companies such as OpenAI, Google and Meta, poor their computing resource into parallelising as best as they can, to bring this time frame down to months, which is of course still a very long time.

However, whatever the task, the vast majority of training intelligence remains constant between request. Think of it this way: once you have learned to read English, you can read many different books, signs, and probably write English yourself too. You don’t have to ‘re-learn’ for each new book, nor for each new letter you want to write. We call this generalised learning, where you can take existing learning and apply it to new situations.

It turns out the training AI models can be similar. 99% of the training of a model is teaching it very low-level concepts such as “What is a character”, and “How to characters conceptually come together to form words”. In an image context, low-level learning might be something like, “What is a line” or even “What is a red pixel next to a yellow one”. Then higher layers combine these low-level rules: “What is a line” allows for “What is a curve”, allowing “What is an arc”, then “What is a circle”, “What is an eye”, “What is a face”, “Is this a face”, “Who’s face is this?” etc.

So rather than retraining a large model from scratch, often you can just retrain these higher level layers to meet your requirements. As with all training, though, to do this you need existing training data. The model can then be “fine tuned” on this training data, retaining its low-level knowledge and become a specialist on this specific new data; slowly it un-generalises and becomes poor at general image recognition and much better at a single specific task.

Coinciding streams

In the AIIA we are always looking for new projects, and I wanted to explore fine-tuning to learn how to do it myself. Of course, learning via a real project is far more enlightening than just creating dummy projects, so the Mary Hamilton transcription seemed to fit this perfectly. David and his team had already transcribed a large number of letters, and we could use these as training data to fine tune a large model to transcribe further letters. I was sceptical that a general LLM would be able to read handwriting, but no harm in trying.

It was happy coincidence that my own stream of learning and David’s requirements happened to align at this time.

Quality and quantity

When fine tuning a model like Llama or Gemma, the longer you spend training on existing data the better the model will learn to perform on its new specialist task. Or at least that’s the theory! In reality, I found that most models were in fact remarkably good at reading handwriting already, and just needed a bit of nudging to get the format and output correct. So rather than fine-tuning to read handwriting, we were mostly fine-tuning to control output, and understand conventions and abbreviations used in 18th century English that have are no longer in common usage. After a relatively small number of examples, diminishing returns started to show, meaning that further training achieved minimal improvement.

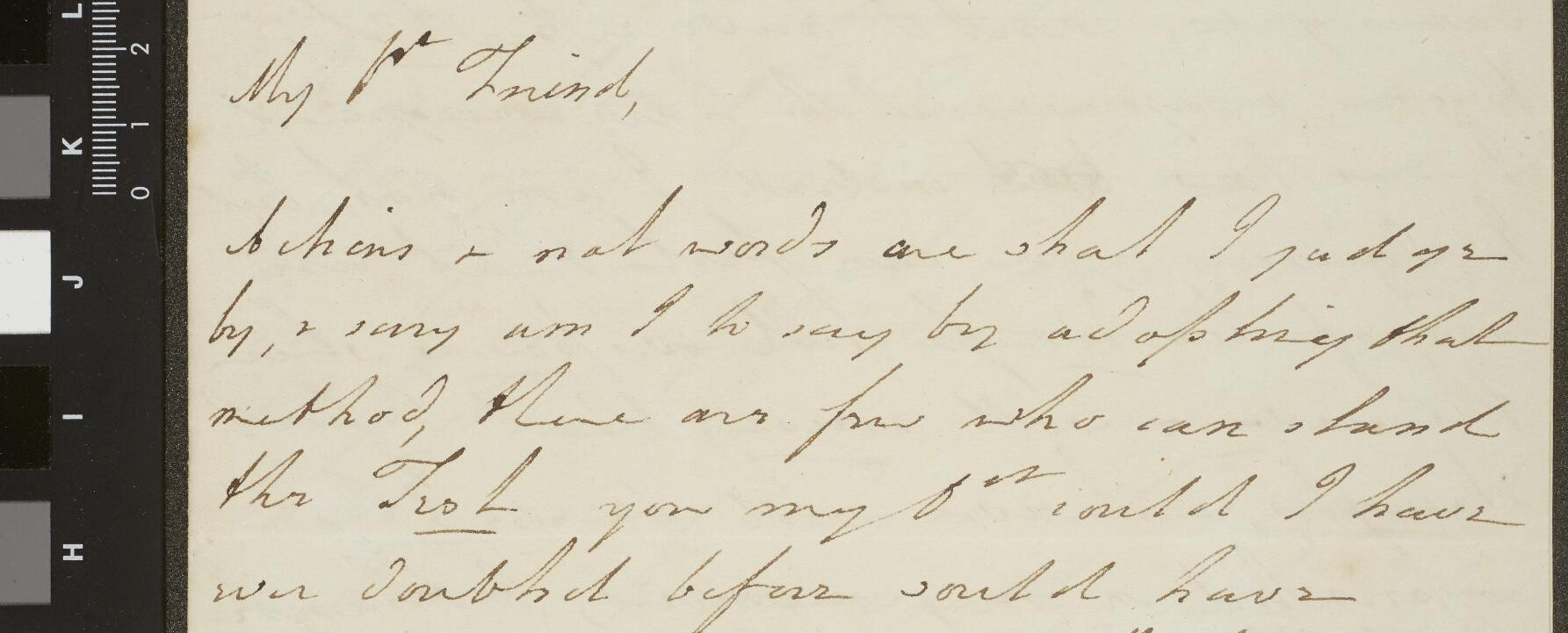

Consider these outputs for different training quantities on this letter:

After fine tuning on one single example

The image displays an aged letter on yellowed paper, with a transparent tape covering its edges.

The letter is written in cursive and is penned in a dark brown or sepia-colored ink. It begins:

“My Dear friend,

Beneath these words one shall find the words of an old, but most sincere, method by which you may be sure the one or few who can shan’ the method of life you my son much more power over your affections and friend’ly advice by the method you wish this epistle was, in the really, outpoured my heart is, I must not fear for me but also for my children a day or much as wished for you can no more say, as I did I know how far I had to have and when it so chance to amire my fortune half.”

The writing continues from here.

At the top of the page, a few illegible words or initials are inscribed in ink. In the upper right corner, the numbers “11 8 [illegible]” and the year “18[illegible]” are also written in ink. A gray marker note is scrawled above the topmost line of words.

A black strip on the left side of the image displays a white ruler graphic with white text in small letters. This graphic appears to be part of a film reader tool. The paper, in turn, rests in a black frame that includes the film reader strip.

After fine tuning on 5 examples

My dear Friend,

Dutchess, I must needs be sorry you were

by, and say arm I’ll say by, adieu fairey, Fad

method, lame are few who can stand

the ordeal you my son might have

ever dared before could have

convinced me of your affection small

Friendly anxiety, by the method you

read this afternoon, it willingly

overpowered me; I did not dare

trust myself to ask million many

questions or say, so much as wished

for, & told, I saw real signal; & I

know Le had your orders so strictly

I summoned my fortitude, HalfIn Attendance

at your Marriage

Mr. Henry Wyllis

In Attendance

at your Marriage

Mr. Henry Wyllis

After fine tuning on 50 examples

accord to the Royal

cladding

My dear Friend,

behind + not words are what I just now

by, + my arm + in every part

method there are two who can thank

the Lord you may yet I might I have

were I allowed before could have

convinced me of your affection & not

Friendlily anxious by the method you

proud this sickness in which usually

overpowered me + I did not share

treat myself to ask William many

questions or say so much as I wished

for I felt I was not equal, & I

could he had yours with as strongly

we commenced my countenance.

After fine tuning on 100 examples

My dear Friend,

dichons + that words are what I put you

by, + very warm to be sure, by all the king that

method there are few who can thank

the Lord you may, that much I have

ever subscribed before would have

encouraged me of your affection & real

Friendly anxiety by the method you

took this Morning, it really

overpowered me, & I did not save

trust myself to ask William many

questions in say so much as I wished

for I felt I was not equal, & I

hope he had your orders as strongly

to enumerate my Countenance, that

It is unlikely that further training will dramatically improve the transcribed text; the handwriting is simply too complex for the lower levels of the model to interpret. However, it is clear that:

- the transcription it has made is remarkably good, considering the source image that most humans struggle to read

- the improvement between 50 examples and 100 examples is relatively small, demonstrating the diminishing returns of further training.

The proof of the pudding is in the eating

After fine tuning the model on a good selection of high quality transcribed letters of all styles, I was confident that the model were now good enough to use for real on some new material.

Here are two examples (one relatively easy to read, one much less so), and they corresponding transcriptions from the model:

My dear Miss Hamilton. This little

papper makes no pretensions to a letter

it only serves to convey You my

sincere good wishes upon Your journey

may every happiness attend You —

Where as well as every where else,

and may the Sea air prove beneficial

to You and all Your companie de voyages

is the very sincere wish of

Dear Madam

The story that you

&c. is extremely pretty — There was once a

Fairy Beauty who had a wicked Wife

who pleased him all day long

One day the poor Fairy must come

to go to the fairy Queen Room to do so, but

Queen burned it down out of envy

For the sake such a pretty life

him was not let to Govern such

a Sensi country for penny was a

Maid of it man & the Queen

begged to pardon and to my surprise

Friends even began to len len for & sent

Them ever affection

Note that in the above, the line lengths may have been word wrapped by WordPress. The original transcription lines match up to the image text.

David suggested a large batch of letters that were about to be transcribed, and I ran them through the newly trained model, with very promising results. I sent the transcriptions through to David and his team, and they found them extremely useful as a starting point for their manual transcriptions.

This was a great result, as my initial scepticism had been proved unfounded, and the model performed far better than I had hoped, with real tangible benefit to a research team.

Lessons learned

I was surprised at just how good the model performed transcribing handwriting. The example given above was deliberately chosen to be on the harder side of the spectrum, but other letters which were written in more clear script were transcribed almost perfectly. There were a few common issues that I noticed:

- The model started to learn people’s names, locations, addresses, titles etc. This was a double edged sword: it meant that the model could speculate on what a name was likely supposed to be, but sometimes that speculation was simply wrong. This wasn’t by design but just inherent to the way that fine tuning doesn’t really understand words so much as learn from what it sees.

- The model struggled greatly with split-page letters, even when trained exclusively on them. In the end I had to write a second much small model to detect double-page spreads and split them into 2 sides first.

- I experimented with fine tuning both the models from Llama (Meta) and Gemma (Google), and there wasn’t much difference in the quality of the outcome. The parameter size was far more important, and models with less than around 14B parameters performed quite poorly.

- The fine tuning on one very specific set of letters and styles meant that the model understandably doesn’t generalise to other letters and styles. As an experiment I tried running the Simon Papers through it (a completely different style, and mostly in German), and the output was largely nonsense. It would be interesting to fine tune using a much wider variety of sources to try and create a slightly more generalised transcription model; this could then be compared to commercial systems like Transkribus.